Field Notes

May 2026 Update to the AI Bottlenecks Trade

Fushimi Inari Taisha, Kyoto, Japan. April 2026

Update on memory

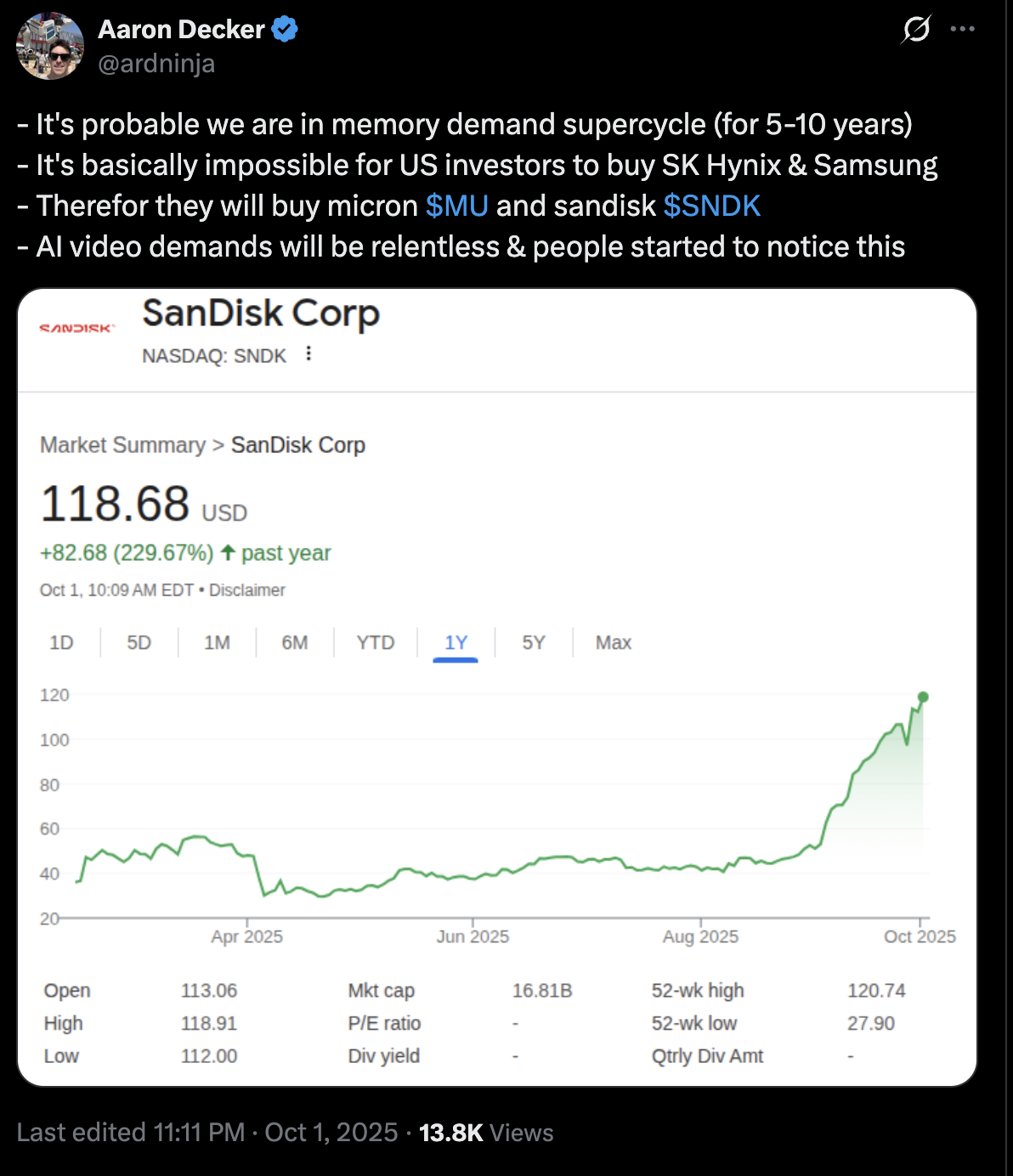

On October 1st, 2025, I tweeted something to the effect of “we are in a memory supercycle, Micron and Sandisk should benefit”. I did this to mark that I had entered the trade. In total I went long Sandisk, Micron, SK Hynix, ONTO, and Teradyne. Around the same time I also opened two other trades, long AMD and long CRWV, but I will come back to those.

Note:

- SK Hynix is the HBM leader (high bandwidth memory - i.e. very high bandwidth RAM)

- Micron does HBM and NAND (flash memory)

- Sandisk does NAND

- Teradyne makes testing equipment (if you ship more memory you need more testing capacity).

Why memory?

Well, one thing I knew was that serving AI models requires a lot of memory. But it wasn’t until I sat down, did some research, and wrote up a few articles that I really understood the degree to which inference, which has to load the LLM weights plus the KV cache, is memory-bandwidth constrained.
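To make the bandwidth point concrete, here is a minimal back-of-the-envelope sketch. It assumes the simplest possible case: one sequence being decoded, where every generated token has to stream all the weights plus the KV cache out of HBM. The model size, cache size, and bandwidth figure below are illustrative assumptions, not numbers from this post, and real serving stacks batch requests to amortize the weight reads.

```python
def max_decode_tokens_per_sec(weight_bytes, kv_cache_bytes, hbm_bandwidth_bytes_per_sec):
    """Rough ceiling on tokens/sec for a single sequence.

    Assumes each decode step reads every weight and the whole KV cache
    from HBM once, and that memory bandwidth is the only limit.
    """
    bytes_read_per_token = weight_bytes + kv_cache_bytes
    return hbm_bandwidth_bytes_per_sec / bytes_read_per_token


GB = 10**9

# Hypothetical inputs (assumptions for illustration only):
weights = 70 * GB      # e.g. a 70B-parameter model at 1 byte per parameter
kv_cache = 10 * GB     # KV cache for a long context
bandwidth = 3350 * GB  # ~3.35 TB/s, roughly H100-class HBM bandwidth

ceiling = max_decode_tokens_per_sec(weights, kv_cache, bandwidth)
print(f"{ceiling:.1f} tokens/sec upper bound for one sequence")
```

The takeaway is that the ceiling scales with bandwidth and shrinks with model size, regardless of how much raw compute the chip has.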

I won’t rehash it here, but in summary: larger models need a large amount of fast memory for inference, more than fits on a single chip, and this is an issue.

Because even the largest H100s, Blackwells, and Vera Rubins cannot hold a single SOTA model on one chip, you need to serve these models on clusters. There is huge demand for more and faster memory per chip, but the amount needed is something like an order of magnitude more than a single chip carries.

I.e., you often need 10 or more chips to serve a single SOTA LLM. Newer LLMs with much larger parameter counts may need 100 H100s or more for inference, simply because of the limited memory on a single chip (80 GB of HBM on a standard H100), and we know there are 10 trillion parameter models that might require 10 TB of HBM to serve (with cache).

Add to that: the more you cache (to save costs), the more memory you want.
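The chip-count arithmetic above can be sketched in a few lines. This is a rough estimate under stated assumptions: 1 byte per parameter (an FP8-style assumption, not a figure from this post), no allowance for activations or framework overhead, and per-chip HBM capacity passed in as a parameter so you can plug in whichever chip you like.

```python
import math


def chips_needed(num_params, bytes_per_param, kv_cache_bytes, hbm_bytes_per_chip):
    """Minimum number of chips whose combined HBM holds weights plus KV cache."""
    total_bytes = num_params * bytes_per_param + kv_cache_bytes
    return math.ceil(total_bytes / hbm_bytes_per_chip)


GB = 10**9

# 10 trillion parameters at 1 byte each is 10 TB of weights (cache set to 0 here).
params = 10 * 10**12

for hbm_gb in (80, 96):  # two per-chip capacities, both just example inputs
    n = chips_needed(params, 1, 0, hbm_gb * GB)
    print(f"{hbm_gb} GB per chip -> at least {n} chips")
# 80 GB per chip -> at least 125 chips
# 96 GB per chip -> at least 105 chips
```

Either way you land north of 100 chips for the weights alone, before adding any cache.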

Memory current state

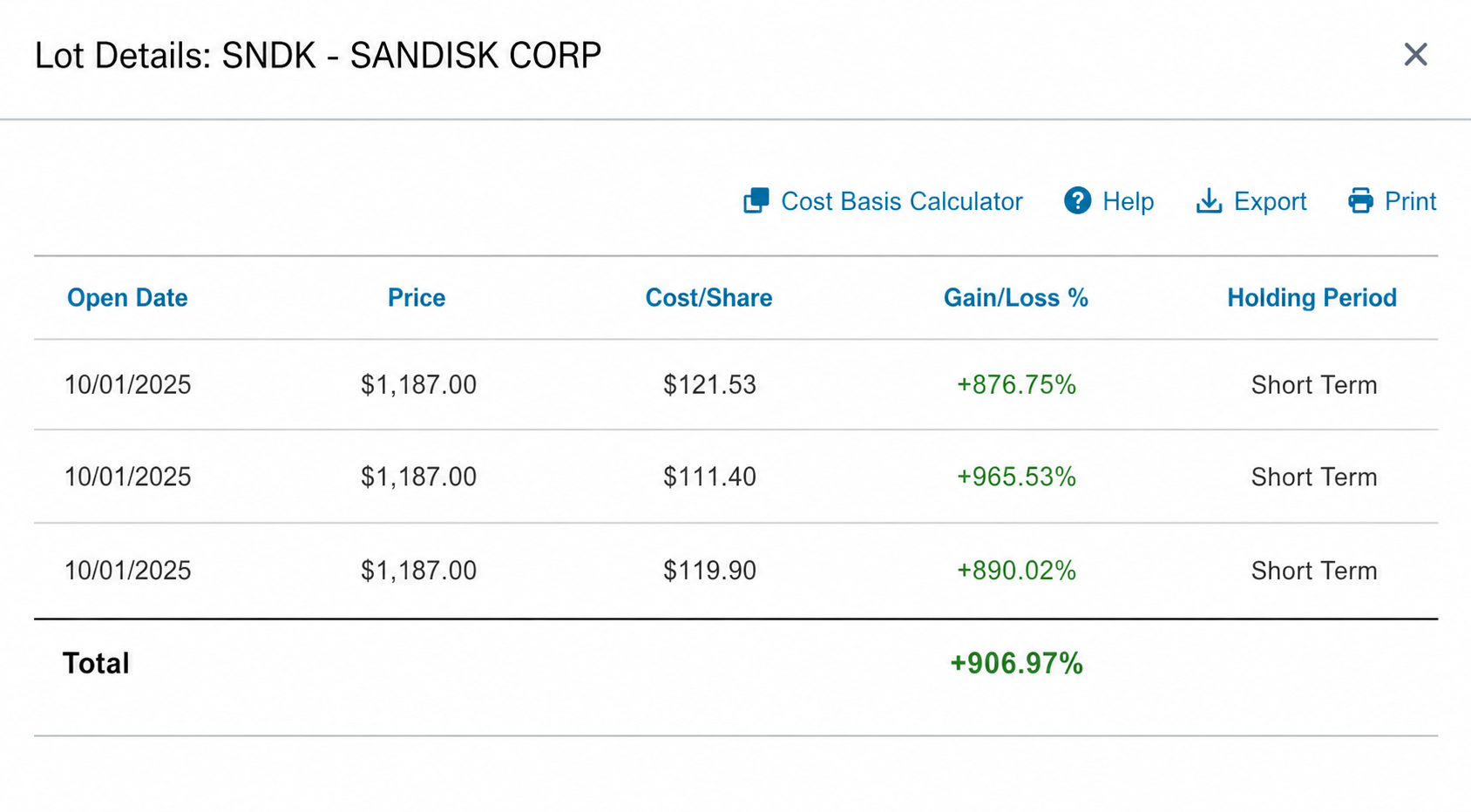

Micron has done well. But Sandisk, as you probably know, has risen very fast:

Yes, that 900% is real. I trimmed something like 20% of my shares over the last 2 months, but I’m still long the bulk of my Sandisk, and here is why:

- Supply still cannot meet demand, potentially until 2028

- HBF (High Bandwidth Flash) is a new idea: high-bandwidth but high-latency memory for use in inference, and Sandisk is leading this effort.

- It’s already clear that fast NAND can be used in some configs to help with caching in the newer Nvidia chips.

As for Micron and SK Hynix: as I already explained, they make HBM, and HBM is one of THE PRIMARY BOTTLENECKS in actually using LLMs at scale.

Micron has not gone up quite as much. A couple of reasons for this: Sandisk was basically mispriced, and the new developments around HBF and using fast local NAND flash for cache in newer Nvidia chips were not priced in last October.

But for Micron the story was clearer. The market’s thinking was: yes, HBM is needed, but we don’t know how durable this demand will be, and we still think Micron is cyclical. So the HBM story was already partly in the price.

But is that still true?

After last quarter, I will just put this up visually so you can see: the forward P/E is something like 5 now. Lol, yes, it’s single digits.

AI demand due to agents

Let’s move on to AI demand: the thing driving all the bottleneck trades is ultimately real-world demand for tokens.

Something happened last fall: real-world demand went vertical because people started to figure out that Claude Code was good now and you could accelerate a ton of real-world work in an agentic fashion.

To be super clear:

A - old way of prompting: you craft a prompt and get a solution to your problem with a single inference call.

B - new way of prompting: you craft a prompt to an agent harness that repeatedly calls a model and makes tool calls until it’s done with its task. Each turn of inference may use many tokens. I have had Codex go for 45 minutes on a problem in this “agent mode”.

Claude Code, Codex, Opencode, and Cursor agent mode use probably 100x the tokens vs the old way of single-shot prompt coding.
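Here is a minimal sketch of why an agent loop burns so many more tokens than a single call. It assumes a simple harness that re-sends the whole growing context (prompt, prior outputs, tool results) on every turn, and it counts tokens processed, ignoring any prompt-caching discounts. The function names and all the token counts are hypothetical, chosen only to illustrate the shape of the growth.

```python
def single_shot_tokens(prompt_tokens, output_tokens):
    """Tokens processed by one inference call."""
    return prompt_tokens + output_tokens


def agent_loop_tokens(prompt_tokens, turns, output_tokens_per_turn, tool_result_tokens_per_turn):
    """Tokens processed when every turn re-reads the full, growing context."""
    total = 0
    context = prompt_tokens
    for _ in range(turns):
        # This turn reads the whole context and generates some output.
        total += context + output_tokens_per_turn
        # The output and the tool result are appended for the next turn.
        context += output_tokens_per_turn + tool_result_tokens_per_turn
    return total


# Hypothetical numbers (assumptions for illustration only):
old_way = single_shot_tokens(prompt_tokens=2000, output_tokens=1000)
new_way = agent_loop_tokens(
    prompt_tokens=2000,
    turns=25,
    output_tokens_per_turn=200,
    tool_result_tokens_per_turn=800,
)

print(old_way)                       # 3000
print(new_way)                       # 355000
print(round(new_way / old_way, 1))   # 118.3
```

Because the context grows every turn, total tokens grow roughly with the square of the number of turns, which is how a 45-minute agent run ends up two orders of magnitude above a single prompt.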

I have been exclusively using Codex & Claude Code instead of the old single-shot Cursor stuff for almost a year now, so I knew about this before other people did. This is part of the reason why I was so confident token demand would explode and entered a bunch of long trades last year related to AI demand.

Leaked revenue numbers - demand has accelerated

It’s been leaked recently that Anthropic is at $44 billion ARR.

Back in August I put a table together trying to game out AI revenues and I put these projections out:

- Anthropic at $20 billion ARR by end of 2026

- OpenAI at $46 billion ARR by end of 2026

We don’t know OpenAI’s numbers, but my guess is that they are already close to the $46 billion ARR run rate, given their recent pivot towards enterprise and their success with Codex + GPT 5.5, which is bonkers smart. And Anthropic already being at more than double (about 2.2x) the bull-case EOY 2026 run rate I projected for them is hugely informative.

So we can assume demand is very strong, as the new agentic workflows would suggest.

CPU demand

Another surprise bottleneck that has popped up is in CPUs.

This is easily explained by looking at OpenClaw as an example: if all of a sudden you have a bunch of always-on agents monitoring things and performing actions, that requires more CPUs to run on.

Q1 2026: Intel said the AI shift “from foundational models to inference to agentic” is “significantly increasing the need for Intel’s CPUs,” and CFO David Zinsner cited the “growing and essential role of the CPU in the AI era” plus “unprecedented demand for silicon.” Intel’s Data Center and AI revenue was $5.1B, up 22% YoY.

AMD Q4 2025 results: AMD Data Center revenue hit a record $5.4B, up 39% YoY, driven by EPYC CPUs and Instinct GPUs. Full-year Data Center revenue was $16.6B, up 32% YoY, reflecting growth across both EPYC and Instinct.

Another source of CPU demand is more complex reinforcement learning (RL) training workflows.

Google Cloud Next 2026: Google said GPUs/TPUs need high-performance CPU services for “complex logic, tool-calls, and feedback loops” around models. It specifically called out CPU instances from Axion, Intel, and AMD for RL tasks such as “reward calculation, agent orchestration, and nested visualization.”

Anyway, two very simple ways to express this trade:

- Long AMD

- Long Intel

Personally, I think Intel is a bit more of a long shot and AMD is a way safer play here. AMD is an OpenAI proxy via their deal from October (so OpenAI is extremely incentivized to get the share price to $600), it’s also a GPU maker whose GPUs are more optimized for inference, and it has very good positioning in the CPU market.

But if Intel does pull a rabbit out of a hat and becomes the TSMC of America, well, the upside is huge.

Datacenter Capacity: the neoclouds trade

The next bottleneck is physical infrastructure: hosting and powering the GPUs and CPUs needed for these new, in-demand AI workloads.

Now, if we assume demand has pulled ahead of even our bullish expectations from last summer/fall, we should expect that there are issues getting enough datacenters built fast enough.

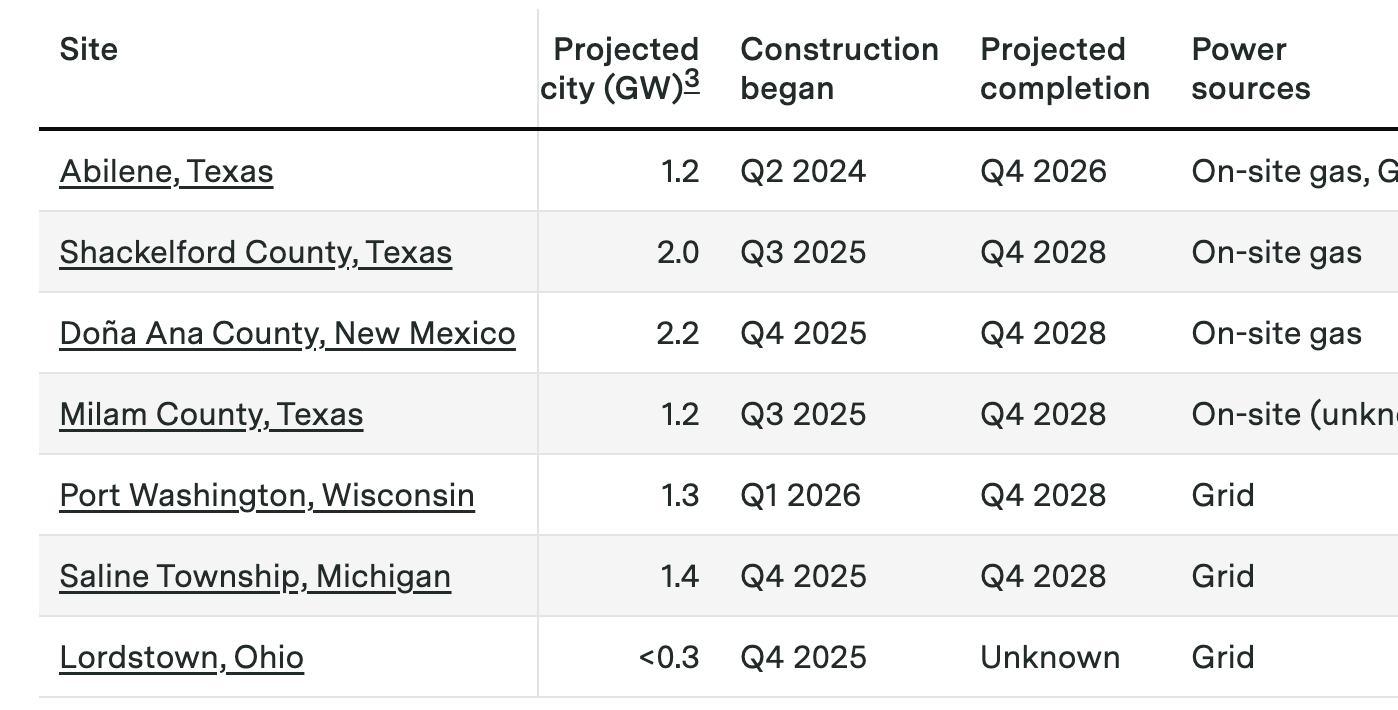

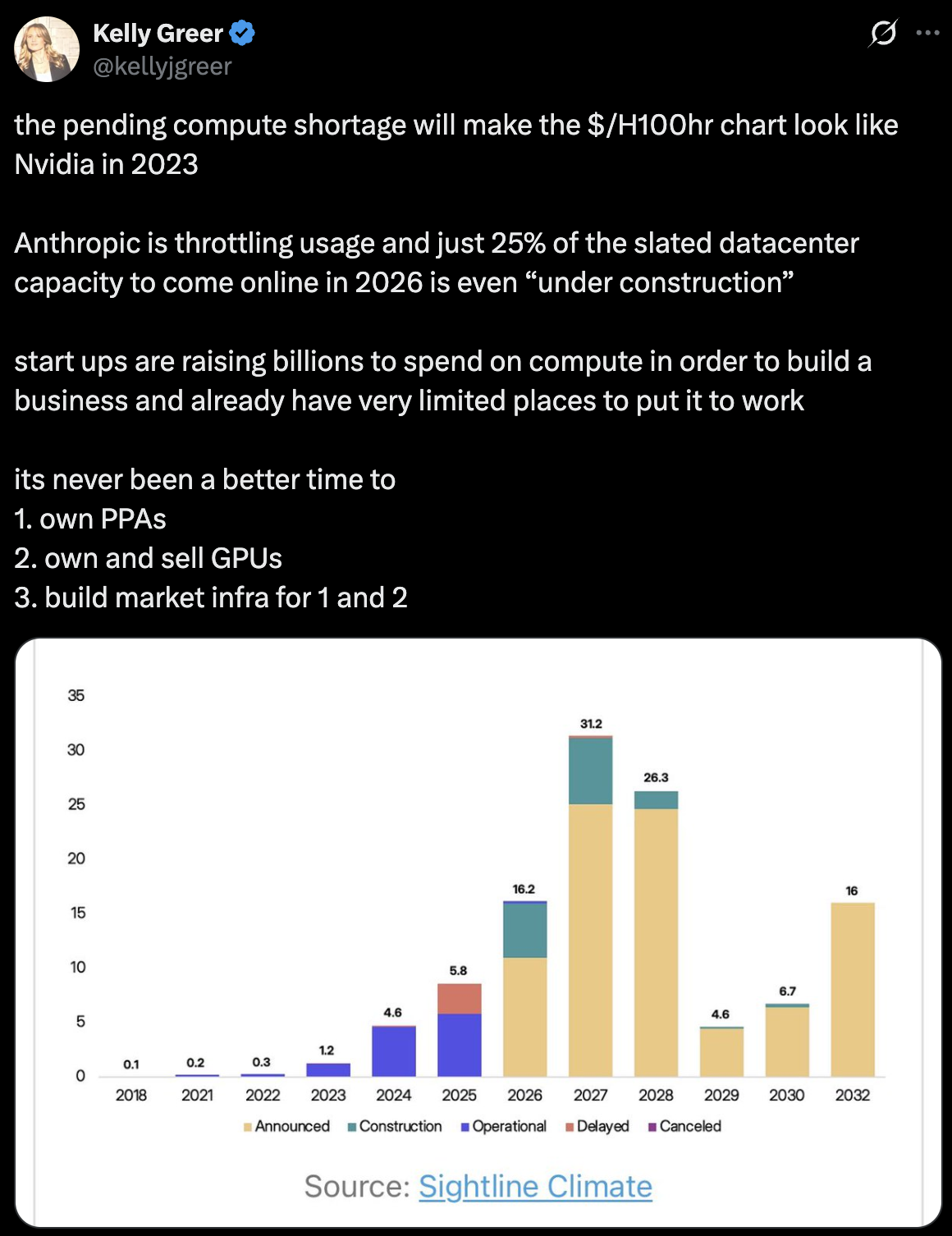

I think I can illustrate this issue quite simply. Here is a table from one of Epoch AI’s blogs following the progress of the OpenAI Stargate project.

Notice anything here?

There is a big gap until 2028…

Everyone started shoveling money into 3-year+ projects last year and now there will be a gap in capacity for the next couple years.

Who was ahead of this curve?

Why, the neoclouds of course! So you have two types of neoclouds:

- Coreweave, Nebius, Fluidstack, etc. They built capacity early specifically for AI.

- The ex-bitcoin miners converting capacity to AI hosting: IREN, Core Scientific, Cipher Digital. These guys already have powered shells and they are converting them.

I think once again, these things can probably re-rate higher as capacity is constrained over the next two-year period.

Let’s check our buddy Leopold’s portfolio:

You will notice several neoclouds in here:

- Coreweave - $CRWV

- Core Scientific - $CORZ

- IREN - $IREN

- Applied Digital - $APLD

- Cipher Digital - $CIFR

- Riot Platforms - $RIOT

- White Fiber - $WYFI

- Bitdeer - $BTDR

So to be honest I’m a little disappointed in this trade because a lot of these have not done much in recent months. I actually have been adding to my positions in Coreweave, Nebius, and Cipher but I’m thinking it’s going to take a little bit longer to play out.

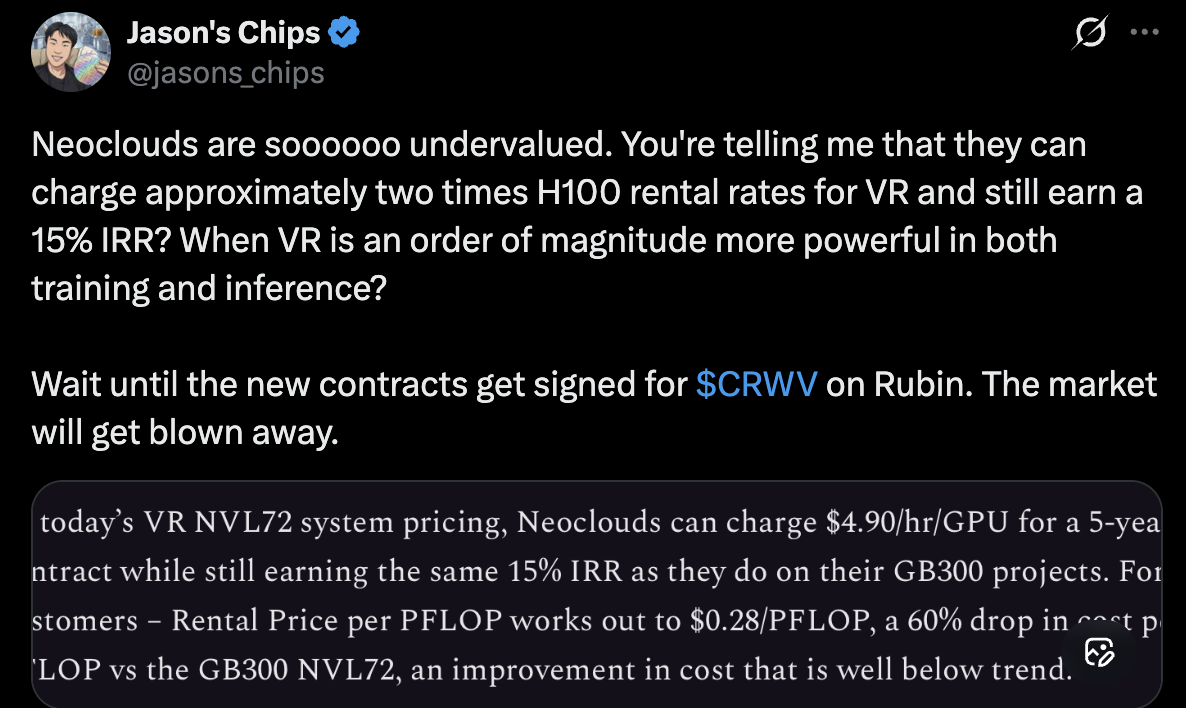

Neoclouds Bull Case

There are a few reasons why I think this is a good trade.

The first is demand: I think it’s pretty clear it’s still strong, which you can see in the leaked Anthropic revenue numbers and other shortages around memory and CPUs.

The second is that the depreciation FUD has been credibly countered, to some degree, by the fact that the rental spot price of the H100 (a GPU launched in 2022) has remained elevated.

The third is political: many jurisdictions are actively gumming up existing construction projects (see Lordstown, OH) and there are many pushing for “datacenter moratoriums” in parts of the USA, Canada, and Europe. That means anyone with existing powered shells and permits will be sitting on a valuable commodity that will increase in value as political pressure on new builds intensifies.

Photonics Update

I wrote about photonics back in February.

At that time I was long Tower Semi, a specialty fab company that does some photonics work.

In this trade you have a couple of leaders like Lumentum $LITE and Coherent $COHR. Since I wrote about photonics being a clear bandwidth bottleneck for serving and training big models, Lumentum has gone up about 100% and Coherent about 50%. Applied Optoelectronics, a smaller company which I didn’t mention, is up something like 350% since then.

So it’s pretty clear the market is still very hot on photonics being a bottleneck solve but unlike memory or CPUs, I think in this instance the market pricing is way, way ahead of actual revenues for these. So I will continue to wait and sit these out.

Conclusions

- Demand for AI tokens is strong and increasing because AI is useful and getting more useful. Anthropic is going nuts w/ usage.

- CPU demand increasing is an interesting new thing that has developed. Like AMD here.

- The neocloud trade has stalled out a bit but things could materially change if a real capacity shortage develops.

- Photonics is something to watch but I’m skeptical of chasing here.

- Memory is super in demand and the stocks are still priced like they will be cyclical and crash.

Of all of these, if you are entering new trades here, the most potential upside seems to be in the neoclouds. But memory producers are also still materially cheap vs literally everything else if you believe this cycle lasts several more years (I do). I do plan to increase my positions in the smaller neoclouds that are owned by the Situational Awareness fund.

As always, not financial advice; for entertainment purposes only, I’m just talking about what I’m personally doing.